4D

Past work in the Cognitive NeuroSystems Lab, at that time the VR Lab, includes studies of search, navigation and object recognition in virtual environments with four spatial dimensions. The work on 4D computer graphics, search and navigation was performed by Greg Seyranian (pictured at left, wearing a head-mounted display), whose doctoral dissertation details the behavioral experiments on search and navigation in 4D virtual environments; Philippe Colantoni, whose work as a postdoctoral fellow included the development of a 4D level editor; Barb Krug, whose digital art made the virtual environments come to life; and D'Zmura. The experiments showed that observers readily learn to use the action-game-like interface and that they can easily code landmarks and perform path-based navigation and search throughout 4D environments. A second set of experiments tested whether users can take 4D rotations into account in a manner consistent with a higher form of navigation ability, for instance, path integration or navigation based on a mental map. Some but not all observers learned how to take the 4D rotations into account. Ma Ge worked as a graduate student on the related question of whether 4D virtual environments like these provide enough information for an observer to infer the correct shape of 4D objects. The answer is yes: the work on 4D structure from motion shows that observers are provided enough information to recover the shape of a 4D rigid object when it is viewed in either orthographic or perspective projection.
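To make the two viewing conditions in the structure-from-motion work concrete, here is a minimal Python sketch of projecting 4D points into 3D. It assumes projection along the W-axis and a hypothetical eye distance of 4 units; the function names and these choices are illustrative assumptions, not details taken from the published algorithm.

```python
from itertools import product

def project_orthographic(point):
    """Orthographic projection along W: simply drop the w coordinate."""
    x, y, z, w = point
    return (x, y, z)

def project_perspective(point, eye_distance=4.0):
    """Perspective projection along W, with the eye on the W-axis at w = eye_distance.

    Points nearer the eye (larger w) are scaled up, points farther away are scaled down.
    The eye position is an assumption made for this sketch.
    """
    x, y, z, w = point
    depth = eye_distance - w
    if depth <= 0:
        raise ValueError("point lies at or behind the eye")
    scale = eye_distance / depth
    return (x * scale, y * scale, z * scale)

# The 16 vertices of a hypercube centered on the origin.
hypercube = list(product((-1.0, 1.0), repeat=4))

ortho = [project_orthographic(v) for v in hypercube]
persp = [project_perspective(v) for v in hypercube]
```

Under orthographic projection the two cubic "ends" of the hypercube land on top of each other, so a single static view is ambiguous; under perspective they appear as a larger and a smaller cube. In both cases it is the change in the projected image as the object rotates that carries the shape information.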


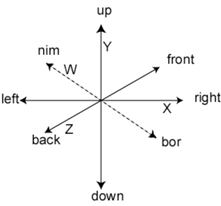

Four spatial dimensions were used in creating 4D virtual environments made to resemble as closely as possible those used in current 3D action games. The Y-axis was a vertical up-down axis along which simulated gravity acted. Three further spatial dimensions lie perpendicular to the Y-axis, effectively creating a floor of three dimensions rather than the normal two. These include the normal X-axis (left-right), Z-axis (back-front), and the fourth W-axis with directions nim and bor. At any one time, the computer graphics software uses OpenGL 3D graphics to display a 3D cross-section of the 4D environment. One sees the 4D layout only by turning or moving through the environment.
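As a rough illustration of why turning reveals new parts of the layout, the following Python sketch treats the displayed cross-section as the set of points with w = 0 in the viewer's frame. The function names, the fixed slice at w = 0, and the landmark position are assumptions made for illustration; they are not taken from the lab's software.

```python
import math

def rotate_xw(point, angle):
    """Rotate a 4D point (x, y, z, w) by `angle` radians in the X-W plane.

    Y (the gravity axis) and Z are left unchanged.
    """
    x, y, z, w = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * w, y, z, s * x + c * w)

def in_cross_section(point, tol=1e-9):
    """True if the point lies in the viewer's 3D slice, taken here as w == 0."""
    return abs(point[3]) <= tol

def visible_points(world_points, viewer_angle):
    """Return the (x, y, z) positions of points that fall in the viewer's slice.

    A viewer turn by `viewer_angle` in the X-W plane is modeled by rotating
    the world by the opposite angle into the viewer's frame.
    """
    seen = []
    for p in world_points:
        q = rotate_xw(p, -viewer_angle)
        if in_cross_section(q):
            seen.append(q[:3])
    return seen

# A hypothetical landmark two units along the W-axis from the viewer.
landmark = (0.0, 0.0, 0.0, 2.0)
before_turn = visible_points([landmark], 0.0)
after_turn = visible_points([landmark], math.pi / 2)
```

With the viewer facing the default direction, the landmark lies outside the slice and `before_turn` is empty. After a quarter turn in the X-W plane, the landmark enters the slice and shows up two units along the viewer's X-axis.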


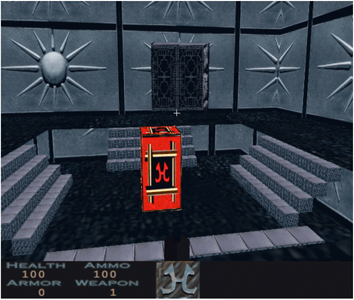

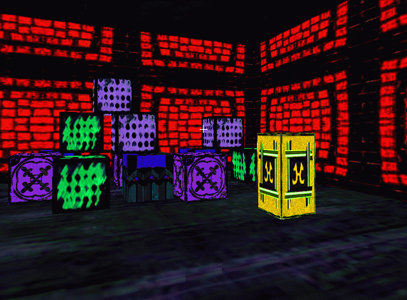

Two rooms in the earliest 4D level, used in search and navigation work. The task was straightforward: start in the home room with the red hyperbox (left), move throughout the environment to find the room with the yellow hyperbox (middle), and then go back to the home room as rapidly as possible. Can people learn to do this quickly?


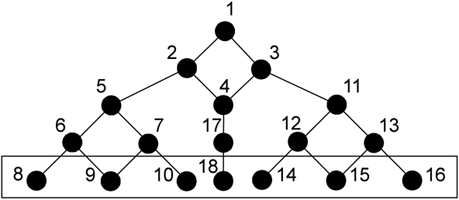

The 4D virtual environment pictured above had 18 rooms connected as shown in the graph at left. Observers were placed in the home room (number 1) and had to make their way through the other rooms until they found the yellow hyperbox. The yellow hyperbox was placed randomly in one of the rooms indicated at the bottom of the graph (numbered 8, 9, 10, 18, 14, 15 and 16). Upon finding the yellow hyperbox, the observer would touch it and then return to the home room to touch the red hyperbox as quickly as possible. The time taken to return to the home room was measured. These times decreased rapidly as observers learned the layout and features of the various rooms.


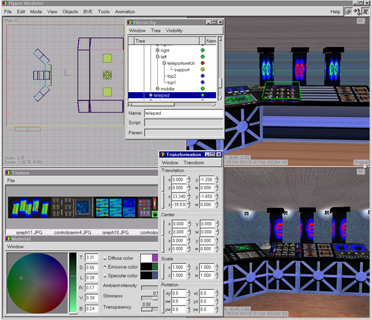

Philippe Colantoni wrote a 4D level editor that made the construction of more complex levels possible. Greg Seyranian and Barb Krug used this to create a 4D virtual environment with a spaceship theme. At left is shown a screenshot of the level editor with views of the spaceship teleport chamber.


Shown is the helm of the spaceship virtual environment (VE). While the VE has four spatial dimensions, at any one instant only three of these are displayed: the graphics engine displays a 3D cross-section of the 4D VE. After some work, the robot "Woody" was able to walk around the VE and avoid colliding with the player. A video of a walk through the spaceship is available (.rm RealPlayer format or .mpg MPEG-2 format).

A second set of experiments on search and navigation tested whether observers could learn to estimate accurately their position and orientation in a 4D virtual environment after moving through it. Knowledge of position and orientation goes beyond simply knowing an efficient path from point A to point B; it suggests the possibility of path integration in 4D, complicated by the possibility of performing rotations in 4D, or the development of map-like knowledge. These experiments used sparse, maze-like levels with an initial home room and three further rooms connected by various sorts of turns. After making their way to the final room, observers had to guess the direction in which the center of the initial home room lay and the straight-line distance to that point. While all observers improved, only two of them demonstrated that they were able to take into account all varieties of 4D rotation available to them. Try a movie of a 4D maze trial. If you feel adventurous, a zipped version of the Hyper software (Windows/OpenGL) is available here. It is best to read the manual before using the software.
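The homing judgment in this task can be written out as an idealized path-integration calculation. The Python sketch below is only an illustration of that calculation, not a model of what observers do and not code from the Hyper software: it assumes the viewer's forward direction is the local Z-axis, that turns are rotations in a plane spanned by two of the X, Z and W axes, and the class and method names are hypothetical.

```python
import math

AXES = {"x": 0, "y": 1, "z": 2, "w": 3}

def identity4():
    return [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]

def plane_rotation(a, b, angle):
    """4x4 rotation by `angle` radians in the plane spanned by axes a and b."""
    m = identity4()
    i, j = AXES[a], AXES[b]
    c, s = math.cos(angle), math.sin(angle)
    m[i][i], m[i][j] = c, -s
    m[j][i], m[j][j] = s, c
    return m

def matmul(p, q):
    return [[sum(p[i][k] * q[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

class PathIntegrator:
    """Tracks 4D position and orientation, starting at the home room center."""

    def __init__(self):
        self.position = [0.0, 0.0, 0.0, 0.0]
        # Columns are the viewer's local axes expressed in world coordinates.
        self.orientation = identity4()

    def turn(self, a, b, angle):
        """Turn relative to the viewer's current frame in the a-b plane."""
        self.orientation = matmul(self.orientation, plane_rotation(a, b, angle))

    def forward(self, distance):
        """Move along the viewer's local Z-axis (column 2 of the orientation)."""
        for i in range(4):
            self.position[i] += distance * self.orientation[i][2]

    def homing(self):
        """Return (unit direction to home in the viewer's frame, distance)."""
        to_home = [-p for p in self.position]
        dist = math.sqrt(sum(v * v for v in to_home))
        # Multiply by the transpose to express the vector in the viewer's frame.
        local = [sum(self.orientation[i][k] * to_home[i] for i in range(4))
                 for k in range(4)]
        direction = [v / dist for v in local] if dist > 0 else [0.0] * 4
        return direction, dist

# Hypothetical trial: 3 units forward, a quarter turn in the Z-W plane, 4 more.
walker = PathIntegrator()
walker.forward(3.0)
walker.turn("z", "w", math.pi / 2)
walker.forward(4.0)
direction, distance = walker.homing()
```

In this example the two legs are perpendicular, so the straight-line distance home is 5 units even though the second leg runs along an axis that was not visible at the start. The direction comes out in the viewer's own frame, which is why the accumulated 4D rotations have to be taken into account to answer correctly.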

References

D'Zmura, M., Colantoni, P. and Seyranian, G. (2001). Virtual environments with four or more spatial dimensions. Presence 9, 616-631.

Ge, M. and D'Zmura, M. (2003). 4D structure from motion: a computational algorithm. Bouman, C.A. and Stevenson, R.L. (Eds.) Computational Imaging (Proc. SPIE/IST) 5016, 13-23.